At its core, human-in-the-loop machine learning is a partnership between human intelligence and AI algorithms. It’s not about machines taking over; it's about making them smarter, more accurate, and more reliable by injecting human expertise right into the training process.

Think of it like this: you have a brilliant AI apprentice who can sift through mountains of data in seconds. But this apprentice lacks real-world wisdom, common sense, and the ability to understand subtle context. The human-in-the-loop (HITL) approach pairs this apprentice with a seasoned human expert. Together, they create a system that’s far more powerful than either could be alone.

What Is Human-in-the-Loop Machine Learning?

Human-in-the-loop isn't just about calling in a person to fix a model after it messes up. It's a proactive strategy baked into the model's lifecycle from the very beginning. It establishes a continuous feedback cycle where the machine does the repetitive, heavy lifting, and people step in precisely when their unique cognitive skills are most needed.

This approach gracefully handles the tasks that trip up even the most sophisticated algorithms. It’s the key to building AI that you can actually trust with complex, real-world problems.

The Core Idea: A Partnership, Not a Replacement

Let's be clear: the goal here is to augment human capabilities, not make them obsolete. HITL is built on the simple truth that machines and people are good at different things. AI is a beast when it comes to speed, scale, and finding patterns in massive datasets. Humans, on the other hand, are masters of:

- Nuance and context: We can easily spot sarcasm in a product review or understand the true intent behind a customer's vague question.

- Edge cases: People are great at handling the weird, one-in-a-million scenarios that a model has never been trained on.

- Domain expertise: A doctor can interpret a confusing medical scan, or a financial analyst can spot a novel type of fraud—judgment calls that require deep experience.

This collaborative dynamic ensures that the AI's power stays grounded in real-world complexity and aligned with what we actually want it to do.

By combining the raw computational power of machine learning with the nuanced judgment of human experts, HITL bridges the gap between theoretical models and practical, reliable applications.

How This Collaboration Builds Smarter AI

So how does it actually work? The HITL process is a constant cycle of learning and refinement.

It starts with a model trained on an initial dataset, which is often labeled by humans. The model then gets to work making predictions on new, unseen data. Whenever it encounters something it’s not sure about—when its confidence score drops below a certain threshold—it doesn't just guess. Instead, it flags the problem and passes it over to a human for review.

A human expert then examines the case, corrects any mistake, provides the correct label, or clarifies the ambiguity. This high-quality, human-verified data point is then fed back into the model for retraining.

Each correction makes the AI a little smarter. Over time, the model learns from this expert feedback and becomes more self-sufficient, requiring less and less human help on similar tasks. This is what makes human-in-the-loop machine learning so effective—it allows models to grow and adapt with experience, just like people do, turning them into truly valuable assets for a business.

Why Humans Are Still the Secret Sauce for Modern AI

With AI systems getting smarter by the day, it’s fair to ask: why do we still need people in the picture? The answer boils down to the simple fact that algorithms don't understand things the way we do. They are pattern-matching machines, pure and simple.

Even sophisticated models can miss the dry sarcasm in a product review, struggle to weigh a tricky ethical dilemma, or fail to grasp the cultural context that gives words their true meaning. These aren't just edge cases; they're huge blind spots where an AI can go completely off the rails. This is where human intelligence acts as the essential reality check, providing the common sense and contextual awareness that machines lack.

The "Garbage In, Garbage Out" Problem

A massive headache in machine learning is data quality. Your model is only ever as good as the data it’s trained on, and real-world data is often messy. It’s frequently incomplete, riddled with errors, or—worst of all—packed with historical biases.

If you train a hiring algorithm on company data that shows a pattern of favoring men for leadership roles, the AI will learn that bias and start disproportionately recommending men. It isn't being malicious; it's just doing its job of finding patterns, without any sense of fairness.

This is where human-in-the-loop machine learning becomes your best defense. People can spot and correct these biases in the training data, ensuring the model learns from information that's fair and representative. To get a better handle on this critical first step, see our breakdown on why data annotation is critical for AI startups in 2025.
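Before a reviewer digs into individual records, a quick audit can show where to look. The sketch below is a minimal Python example with invented data: it compares the share of positive labels per group and flags the dataset when the lowest rate falls under 80% of the highest. That cutoff is loosely modeled on the "four-fifths" rule of thumb from US employment guidance and is only an assumption here, as are the function name and the field names.

```python
from collections import defaultdict

def positive_rate_by_group(records, group_key, label_key):
    """Return the share of positive labels within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[label_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}

# Invented historical data, for illustration only.
history = [
    {"gender": "male", "promoted": True},
    {"gender": "male", "promoted": True},
    {"gender": "male", "promoted": True},
    {"gender": "male", "promoted": False},
    {"gender": "female", "promoted": True},
    {"gender": "female", "promoted": False},
    {"gender": "female", "promoted": False},
    {"gender": "female", "promoted": False},
]

rates = positive_rate_by_group(history, "gender", "promoted")

# Ratio of the lowest group rate to the highest; 0.8 is an assumed cutoff.
ratio = min(rates.values()) / max(rates.values())
flag_for_review = ratio < 0.8

print(rates, round(ratio, 2), flag_for_review)
```

A flag like this doesn't prove the data is unfair. It simply tells a human reviewer which slice of the dataset deserves a closer look before training.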

Making Experts Even Better

The real power of AI in business isn't about replacing people—it's about giving them superpowers. Imagine a radiologist using an AI tool to scan thousands of MRIs. The AI can instantly highlight suspicious areas that a human eye might overlook after a long day, but the final call still comes from the doctor. That expert can weigh the AI’s suggestion against the patient’s complete medical history and other nuanced factors.

This kind of partnership is the best of both worlds. You get the machine's incredible speed and ability to work at a massive scale, combined with the irreplaceable depth of human expertise. The result is a system that delivers faster, more accurate, and more reliable outcomes.

In high-stakes fields like healthcare, finance, and autonomous systems, human judgment isn't just a benefit—it’s a non-negotiable requirement for safety, accountability, and public trust.

The AI revolution hasn't made humans obsolete. If anything, it’s made the human-in-the-loop approach more crucial than ever for building responsible and effective systems. By pairing human oversight with machine intelligence, we can create AI that is not only powerful but also fair, safe, and aligned with our values.

Don't think of human-in-the-loop machine learning as a single event. It's really more of a continuous cycle, a partnership between a machine and a person. The best way to picture it is to imagine an AI model as a bright-eyed student and a human expert as their trusted teacher. The whole point is to help that student learn on the job, get more confident, and eventually make fewer mistakes.

This whole "teaching" process isn't random; it follows a well-defined workflow designed to make the learning as efficient as possible. It’s a dynamic loop where the AI does the heavy lifting, but the human expert jumps in at just the right moments to offer guidance, corrections, and crucial context.

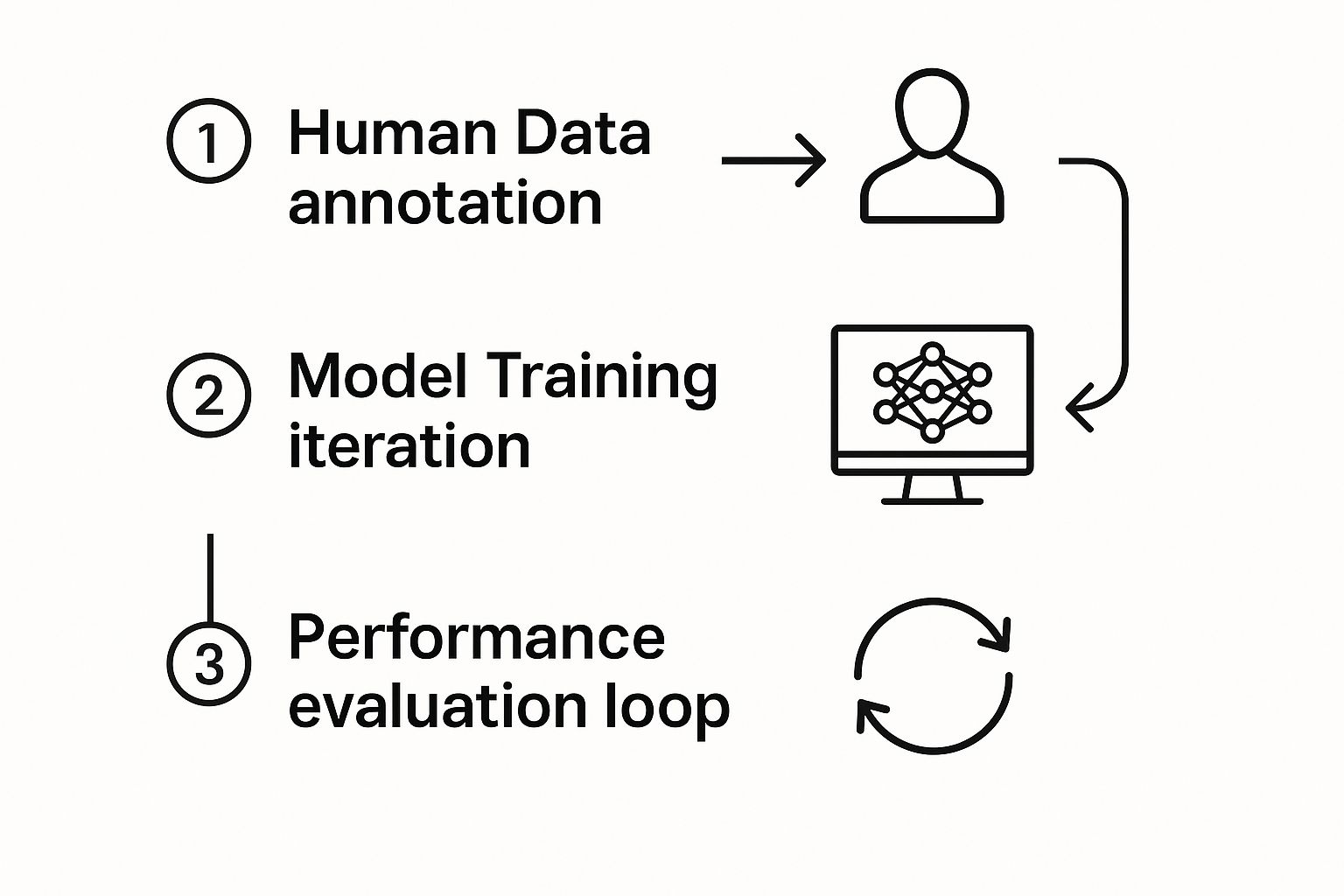

This visual breaks down the basic flow. It all starts with human knowledge, which fuels the model's training. From there, a performance evaluation loop keeps everything improving.

This constant feedback is the secret sauce. Human expertise is continually fed back into the system, making the AI smarter and more reliable with every single cycle.

The Initial Training Phase

Every machine learning model starts its life with data. In a HITL system, that first batch of data is usually prepared and labeled by human experts. You can't overstate how important this step is—the quality of this initial training data sets the baseline for the model's entire performance.

Think about medical imaging. In this first stage, radiologists might spend hours carefully labeling thousands of X-rays, teaching an AI how to spot the subtle differences between healthy and diseased tissue. This foundational work often involves specialized image annotation tools for medical AI to ensure the labels are precise. Once this high-quality dataset is ready, the model trains on it and learns the basic rules of the game.

Prediction and Confidence Scoring

Once the initial training is done, the model is put to work making predictions on new, unseen data. This is where the "loop" really kicks into gear. With every single prediction it makes, the model also calculates a confidence score, which is basically its way of saying how sure it is about its answer.

A high confidence score, like 98% certain it’s a cat, means the prediction is probably right and can be handled automatically. But a low score, say 45% certain, is a red flag. It’s the model raising its hand and admitting it needs some help.

This confidence threshold is the trigger for human intervention. It’s a brilliant way to make sure your experts spend their time on the tricky, ambiguous cases where their judgment truly matters, instead of wasting it on the easy stuff.
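To make the threshold idea concrete, here is a minimal Python sketch. It assumes the model exposes a raw score per class and treats the largest softmax probability as its confidence, which is one common proxy and is not always well calibrated. The `route` function, the `LABELS` list, and the 0.80 threshold are illustrative assumptions, not part of any particular product.

```python
import math

def softmax(logits):
    """Convert raw model scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def route(logits, labels, threshold=0.80):
    """Return (label, confidence, destination) for a single prediction."""
    probs = softmax(logits)
    confidence = max(probs)
    label = labels[probs.index(confidence)]
    # Confident predictions are handled automatically; the rest go to a person.
    destination = "auto" if confidence >= threshold else "human_review"
    return label, confidence, destination

LABELS = ["cat", "dog", "rabbit"]  # hypothetical classes

confident = route([6.0, 1.0, 0.5], LABELS)   # one score clearly dominates
uncertain = route([1.2, 1.0, 0.9], LABELS)   # scores are nearly tied

print(confident[0], confident[2])
print(uncertain[0], uncertain[2])
```

With these made-up scores, the first prediction is handled automatically and the second is sent to a reviewer. In a real system the threshold would typically be tuned on held-out data rather than picked by hand.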

Human Review and Correction

When a prediction's confidence score dips below that set threshold, the system automatically flags it and sends it over to a human for a second look. The expert then reviews the data and the model’s guess, and either gives it a thumbs-up or makes a correction.

Let's imagine an e-commerce company using AI to sort customer support tickets.

- Prediction: The AI classifies a new ticket as a "Billing Inquiry" but only with 52% confidence.

- Flagging: Since that score is pretty low, the system routes the ticket to a human support agent.

- Human Review: The agent reads the ticket and instantly sees the customer is actually asking for a "Product Return Request."

- Correction: The agent changes the label to the correct category.

This correction does more than just solve one customer's problem. It becomes a brand-new, human-verified piece of training data for the AI.
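The ticket walkthrough above can be sketched in a few lines of Python. Everything here is hypothetical: the `Ticket` class, the 0.70 review threshold, and the sample ticket text are assumptions chosen to mirror the example, not a real support system's schema.

```python
from dataclasses import dataclass
from typing import Optional

REVIEW_THRESHOLD = 0.70  # assumed cutoff; tune it for your own use case

@dataclass
class Ticket:
    text: str
    predicted_label: str
    confidence: float
    human_label: Optional[str] = None  # filled in by the reviewer, if any

    @property
    def final_label(self) -> str:
        # A human decision, when present, overrides the model's guess.
        return self.human_label or self.predicted_label

def needs_review(ticket: Ticket) -> bool:
    """Flag tickets whose confidence falls below the review threshold."""
    return ticket.confidence < REVIEW_THRESHOLD

def to_training_example(ticket: Ticket) -> dict:
    """Turn a processed ticket into a labeled example for later retraining."""
    return {
        "text": ticket.text,
        "label": ticket.final_label,
        "source": "human" if ticket.human_label else "model",
    }

ticket = Ticket(
    text="I was charged for shoes I want to send back.",  # invented text
    predicted_label="Billing Inquiry",
    confidence=0.52,
)

if needs_review(ticket):
    # In a real system this value would come from the agent's review screen.
    ticket.human_label = "Product Return Request"

example = to_training_example(ticket)
print(example)
```

Keeping a `source` field on every example is a small design choice that pays off later, since it lets you weight or audit human-verified labels separately from the model's own guesses.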

Closing the Loop with Retraining

This brings us to the final, and most crucial, step: feeding all those human-verified corrections back into the model. Periodically, the model gets retrained using an updated dataset that includes all the new examples provided by the human reviewers.

Each one of these retraining cycles makes the model a little bit smarter. It learns from its past confusion and gets better at handling similar situations in the future. Over time, you'll see the model's confidence scores improve across the board, which means fewer and fewer cases will need a human to step in. It’s this iterative process that allows a HITL system to constantly evolve.
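Here is a rough Python sketch of that retraining step. It assumes corrections are collected as a dictionary keyed by example id and that retraining only runs once a minimum batch of feedback has piled up. The `train_majority_baseline` function is a deliberately trivial stand-in for a real training job, and all names and data are made up for illustration.

```python
from collections import Counter

def merge_corrections(dataset, corrections):
    """Return a new dataset in which human corrections override older labels.

    Both arguments map an example id to a (text, label) pair.
    """
    merged = dict(dataset)
    merged.update(corrections)  # corrected ids are replaced, new ids are added
    return merged

def should_retrain(pending_corrections, min_batch=3):
    """Retrain only once enough new human feedback has accumulated."""
    return len(pending_corrections) >= min_batch

def train_majority_baseline(dataset):
    """Stand-in for a real training job: 'learns' the most common label."""
    counts = Counter(label for _, label in dataset.values())
    return counts.most_common(1)[0][0]

dataset = {
    1: ("Where is my invoice?", "Billing Inquiry"),
    2: ("I want to send this back", "Billing Inquiry"),  # mislabeled
    3: ("Card was charged twice", "Billing Inquiry"),
}
corrections = {
    2: ("I want to send this back", "Product Return Request"),  # fixes id 2
    4: ("How do I return shoes?", "Product Return Request"),
    5: ("Refund for returned item", "Product Return Request"),
}

if should_retrain(corrections):
    dataset = merge_corrections(dataset, corrections)
    model = train_majority_baseline(dataset)
    print(len(dataset), model)
```

Batching corrections like this is one reasonable policy among several. Some teams retrain on a fixed schedule instead, and others retrain when monitored accuracy drops.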

This adaptive approach is a world away from a rigid, fully automated system. The table below really highlights the key differences.

Human-in-the-Loop vs. Fully Automated ML Systems

| Characteristic | Human-in-the-Loop (HITL) System | Fully Automated System |
|---|---|---|
| Accuracy | High and continuously improving as the model learns from human corrections. | Static after initial training; can degrade as incoming data shifts. |
| Edge Cases | Effectively handles rare and ambiguous scenarios by routing them to human experts. | Often fails on unseen or unusual data, leading to unpredictable errors. |
| Implementation Cost | Higher initial and ongoing costs due to the need for a skilled human workforce. | Lower operational costs once deployed, but higher risk of costly errors. |
| Adaptability | Highly adaptable to changing data patterns and evolving business needs. | Rigid; typically needs a dedicated retraining effort to adapt to new information. |

Ultimately, a HITL system embraces collaboration, creating a model that not only performs well on day one but also has the built-in capacity to grow and adapt.

Real-World Applications and Business Value

The real magic of human-in-the-loop machine learning isn't found in theory; it's measured by the impact it has on the bottom line. Across industries, this blend of human insight and machine power is unlocking real value by making AI more accurate, reducing risk, and building systems we can actually trust.

Let's move past the workflow diagrams and dive into some concrete examples where this partnership is making a tangible difference. Each one shows how HITL elevates a good AI model into a great one—one that can handle the messy, unpredictable nature of the real world.

Boosting Conversions in E-commerce

The world of online retail is a brutal battleground where understanding exactly what a customer wants is everything. Think about a search for "summer dress." Is the shopper looking for a casual beach cover-up, or a formal gown for an outdoor wedding? A purely automated algorithm could easily miss the mark, serving up irrelevant results and sending a potential buyer straight to a competitor.

This is where human-in-the-loop really shines. When a model isn't sure about a query, a human reviewer steps in to correct its course. Say the AI keeps showing standard running shoes for the query "running shoes for flat feet." A person can feed it correctly labeled examples, teaching it the subtle but crucial difference in orthopedic footwear.

This constant fine-tuning leads to some powerful results:

- Higher search relevance: Customers find what they're looking for without the frustration.

- Increased conversion rates: A smooth, intuitive search experience naturally leads to more sales.

- Improved customer loyalty: Shoppers remember the sites that just "get" them and come back for more.

The business value is crystal clear. Smarter search and recommendation engines, guided by human feedback, create a superior shopping journey that directly fuels revenue. It's a fantastic example of optimizing customer experience with AI, with human intelligence pointing the automated systems in the right direction.

Advancing Diagnostics in Healthcare

In medicine, the stakes couldn't be higher. A single diagnosis can change a person's life, making accuracy paramount. Here, human-in-the-loop machine learning isn't just a nice-to-have; it's a critical safety net, especially in medical imaging. AI models are getting incredibly good at spotting potential anomalies in X-rays, MRIs, and CT scans.

But an AI might flag thousands of tiny deviations. It takes a trained radiologist to provide the essential context—to distinguish a harmless cyst from a malignant tumor. That's a judgment call that comes from years of specialized experience. The AI acts as a super-powered assistant, tirelessly scanning and highlighting areas of concern that a human eye might overlook, but the radiologist makes the final, critical decision.

By pairing the pattern-recognition speed of an AI with a physician’s diagnostic wisdom, healthcare providers can catch diseases earlier and craft more effective treatment plans. It’s a partnership that literally saves lives.

This synergy also has a clear business case for hospitals and clinics. It improves efficiency, reduces the immense risk of misdiagnosis, and showcases how powerful data-driven decision-making can be when it's grounded in human expertise. You can learn more about how it works here: https://ziloservices.com/blogs/data-driven-decision-making/

Ensuring Safety in Autonomous Vehicles

A self-driving car has to make sense of a chaotic world in milliseconds. It must correctly identify every object in its path—pedestrians, cyclists, traffic cones, and other cars—in countless different scenarios. The number of "edge cases" it might encounter is practically infinite.

That's why autonomous vehicle companies employ massive teams of human annotators. When a car's AI gets confused (is that a plastic bag blowing across the road or a small animal?), it flags the data. A human then steps in to label the complex scene accurately. This feedback is then used to retrain the AI across the entire fleet, making every single vehicle on the road a little bit smarter and a lot safer.

This specific use of HITL delivers undeniable business value by:

- Reducing operational risk: Every human correction strengthens the AI, directly lowering the chances of a costly and tragic accident.

- Accelerating development: Human feedback helps the model master rare events far faster than it ever could on its own.

- Building public trust: A transparent, rigorous, human-led safety process is non-negotiable for getting regulatory approval and winning over a skeptical public.

Navigating Common Implementation Challenges

While human-in-the-loop ML sounds great in theory, making it work in the real world is a different story. It's not a simple plug-and-play solution. The road to getting it right is filled with some very practical, and very human, hurdles.

Successfully blending people and algorithms requires a lot of forethought. You have to tackle both the human side of the equation—managing your experts—and the technical side, building an infrastructure that doesn't grind to a halt. Let's break down what you'll be up against.

The Human Side of the Equation

The most obvious challenge? People cost money. Unlike a purely software-based solution that you can scale with more server power, a HITL system scales with headcount. Finding, training, and keeping skilled domain experts on board is a serious investment.

Beyond the budget, keeping your human reviewers consistent is a constant battle. Give the same ambiguous piece of data to two different experts, and you might get two slightly different interpretations. This kind of disagreement introduces noise into your training data, which can end up confusing your model more than helping it.
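One common way to put a number on reviewer disagreement is Cohen's kappa, which measures how often two annotators agree beyond what chance alone would produce. The sketch below is a small pure-Python version with invented labels. It is meant as an illustration, not a replacement for a tested statistics library.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)

    # Observed agreement: fraction of items given the same label by both.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    # Expected agreement if each annotator labeled at random
    # according to their own label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)

    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)

# Invented sentiment labels from two reviewers on the same eight items.
annotator_1 = ["pos", "pos", "neg", "neg", "pos", "neg", "pos", "neg"]
annotator_2 = ["pos", "neg", "neg", "neg", "pos", "neg", "pos", "pos"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(round(kappa, 2))  # 0.5
```

Here the reviewers agree on six of eight items (75%), but half of that agreement would be expected by chance, so kappa comes out at 0.5. A low score is usually a sign that the labeling guidelines need tightening.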

You also have to worry about annotator fatigue. Staring at the same types of tasks all day wears people down. When focus slips, so does accuracy, and the quality of your entire feedback loop is at risk.

To get ahead of these issues, you need a solid game plan:

- Clear Guidelines: Create a rock-solid, objective rulebook for how data should be labeled or reviewed. This is non-negotiable.

- Quality Assurance: Build in a second layer of review. Having some work double-checked by another expert is a great way to catch inconsistencies early.

- Workflow Optimization: Design the work to be as engaging as possible and give your team tools that reduce the mental strain.

Technical and Ethical Hurdles

On the tech side, building a good user interface (UI) for human feedback is way harder than it looks. The interface has to be so intuitive that an expert can jump in and make corrections quickly and accurately, without needing a manual. A clunky or slow UI will create a massive bottleneck and frustrate everyone involved.

The backend is just as tricky. You need a system that can automatically flag low-confidence predictions, send them to the right person, and then seamlessly feed their corrections back into the model for retraining. This feedback loop has to be fast, reliable, and ready to handle a constant stream of data. These technical components are often part of a much bigger push for efficiency, a topic we explore more in our guide to business process automation.
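As a rough illustration of that backend, the Python sketch below keeps one priority queue per ticket category, so the right specialists see the least-confident items first. The `ReviewQueue` class, its method names, and the 0.75 threshold are all hypothetical. A production system would also need persistence, authentication, and a real retraining hook.

```python
import heapq
from collections import defaultdict

class ReviewQueue:
    """In-memory queue that routes low-confidence items to reviewers."""

    def __init__(self, threshold=0.75):
        self.threshold = threshold
        # category -> heap of (confidence, sequence number, item id)
        self._queues = defaultdict(list)
        self._seq = 0  # tie-breaker so equal confidences stay in arrival order
        self.corrections = []  # (item id, corrected label) pairs for retraining

    def submit(self, item_id, category, confidence):
        """Queue the item if its confidence is low; return True if queued."""
        if confidence >= self.threshold:
            return False
        heapq.heappush(self._queues[category], (confidence, self._seq, item_id))
        self._seq += 1
        return True

    def next_for(self, category):
        """Pop the least-confident item in a category, or None if empty."""
        queue = self._queues[category]
        if not queue:
            return None
        return heapq.heappop(queue)[2]

    def record(self, item_id, corrected_label):
        """Store a reviewer's correction for the next retraining run."""
        self.corrections.append((item_id, corrected_label))

queue = ReviewQueue()
queue.submit("ticket-1", "billing", 0.52)
queue.submit("ticket-2", "billing", 0.31)
queue.submit("ticket-3", "returns", 0.95)  # confident enough, not queued

first = queue.next_for("billing")
queue.record(first, "Product Return Request")
print(first, queue.corrections)
```

Serving the lowest-confidence item first is just one possible ordering. Depending on the business, you might instead prioritize by customer wait time or by the cost of a wrong answer.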

But perhaps the most dangerous challenge is the quiet risk of human bias. If your team of reviewers all come from a similar background, their shared unconscious biases can get baked right into the AI. For instance, a team that isn't culturally diverse might consistently mislabel slang or misjudge sentiment from demographics they're not familiar with, creating a model that is systematically skewed.

To make human-in-the-loop machine learning a success, you have to meet these challenges head-on. By planning for the costs, designing for consistency, building user-friendly tools, and actively fighting bias, you can create a system that truly gets the best of both human and machine intelligence.

What's Next for Human and AI Collaboration?

The partnership between people and intelligent machines is getting more sophisticated every day. We're moving past the simple idea of humans just fixing AI mistakes. Now, it's about a much deeper, more integrated collaboration. The conversation has shifted from "humans versus AI" to how we can build the next wave of powerful, trustworthy systems together. It's less about replacement and more about a genuine fusion of skills.

One of the biggest forces shaping this future is the rise of synthetic data: information that's artificially created to train AI models. The AI world is facing a looming data shortage. Some forecasts warn that the supply of high-quality, real-world data could run short within the next few years. Synthetic data is one promising way to fill that gap.

But here’s the catch: just making up data isn't enough. It has to be realistic, relevant, and accurate. That's where human-in-the-loop comes back into the picture. People are still essential for validating this artificial data to make sure it actually improves the model instead of teaching it bad habits. For a deeper dive, humansintheloop.org has some great insights on this validation process.

A Safeguard Against Model Collapse

There's a fascinating and slightly scary phenomenon that can happen as AI models, especially large language models (LLMs), get trained on data generated by other AIs. It's called model collapse.

Essentially, the model starts to forget what real-world data actually looks and sounds like, with all its messiness and unpredictability. Its performance slowly degrades over time. Think of it like making a photocopy of a photocopy—each copy gets a little fuzzier until the final image is just a blurry blob.

Human-in-the-loop machine learning acts as a vital anchor to reality. By constantly feeding the system fresh, human-verified information and corrections, HITL helps counter this inward spiral. It keeps the model grounded in real human knowledge and experience, protecting its accuracy and usefulness for the long haul.

Expanding Beyond Data Labeling

The core ideas behind HITL are also breaking out of their traditional home in data annotation and finding a place in far more complex and strategic fields.

We're seeing this collaborative model show up everywhere:

- Scientific Discovery: Scientists are working with AI to sift through enormous datasets. The AI flags potential patterns, and the human researchers bring their deep expertise to figure out what those patterns mean and where to look next.

- Strategic Business Planning: An AI can run simulations for thousands of different market scenarios, but it takes a human executive to provide the strategic vision, gut instinct, and ethical judgment to make the final call.

The future of artificial intelligence is fundamentally collaborative. It’s a partnership where machines handle the scale and speed, while humans provide the wisdom, context, and ethical compass needed to solve the world's most complex challenges. This ongoing dialogue between human and machine is the key to unlocking AI's true potential.

Got Questions About Human-in-the-Loop? We’ve Got Answers.

When you start digging into human-in-the-loop machine learning, a few common questions always seem to pop up. Let's clear the air on some of the most frequent ones so you can see exactly how this approach works in the real world.

What's the Difference Between HITL and Active Learning?

It’s easy to get these two mixed up because they’re closely related, but they aren't the same thing. The simplest way to think about it is that active learning is a tactic, while HITL is the overall strategy.

Active learning is a clever technique where the model itself flags the data it’s most confused about. Instead of having a human review everything, the model specifically asks for help on the most ambiguous examples, making the training process far more efficient. It’s all about prioritizing the human expert’s time.

HITL, on the other hand, is the entire framework for human-AI collaboration. It can include active learning, but it also covers things like having humans verify the final output, perform quality checks, or monitor the model's performance over time.

So, you could say that all active learning is a form of HITL, but not all HITL systems necessarily use active learning.
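For a feel of how active learning picks its questions, here is a minimal Python sketch of uncertainty sampling, one of the most common selection strategies. It ranks unlabeled items by the entropy of the model's predicted probabilities and sends only the top few to a human. The item ids, the probabilities, and the labeling budget are invented for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy of a probability list; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_labeling(predictions, budget):
    """Pick the `budget` items whose predictions are most uncertain.

    `predictions` maps an item id to the model's class probabilities.
    """
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,  # most uncertain first
    )
    return ranked[:budget]

# Hypothetical model outputs for four unlabeled images (two classes).
unlabeled = {
    "img_01": [0.98, 0.02],
    "img_02": [0.55, 0.45],
    "img_03": [0.70, 0.30],
    "img_04": [0.50, 0.50],
}

to_review = select_for_labeling(unlabeled, budget=2)
print(to_review)  # ['img_04', 'img_02']
```

The model is almost sure about `img_01`, so asking a human about it would add little. The two near coin-flip cases are where a single human label teaches the model the most.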

How Much Human Help Do I Actually Need?

There’s no magic number here. The amount of human involvement you'll need really depends on a few key things.

- Model Maturity: Is your model brand new or has it been running for a while? A fresh model needs a lot of hand-holding, while a mature one might just need a human to check in on its trickiest predictions.

- Task Complexity: Simple tasks, like sorting pictures of cats and dogs, need less oversight. Something complex, like interpreting a medical X-ray, will naturally require a lot more expert review.

- The Cost of Getting It Wrong: If a mistake is just a minor inconvenience, you can lean more on automation. But if an error could lead to a major financial loss or a safety incident, you’ll want a human involved every step of the way.

The ultimate goal is to gradually reduce the need for human input. As the model gets smarter and more confident, your experts can shift their focus from routine checks to handling only the most challenging and unusual cases.
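The "cost of getting it wrong" factor can be turned into simple arithmetic. The Python sketch below assumes the model's confidence is a reasonably calibrated probability of being right, and escalates a prediction whenever the expected cost of an error exceeds the cost of a human review. The function names and all dollar figures are made up for illustration.

```python
def should_escalate(confidence, error_cost, review_cost):
    """Escalate when the expected cost of a mistake exceeds a review's cost."""
    expected_error_cost = (1.0 - confidence) * error_cost
    return expected_error_cost > review_cost

def break_even_confidence(error_cost, review_cost):
    """Confidence at which automating and reviewing cost the same."""
    return 1.0 - review_cost / error_cost

# Low stakes: a mis-tagged photo costs about $0.10, a review costs $0.50.
# Expected error cost at 90% confidence: 0.10 * 0.10 = $0.01, so automate.
photo = should_escalate(confidence=0.90, error_cost=0.10, review_cost=0.50)

# High stakes: a bad call costs about $10,000, a review costs $25.
# Expected error cost at 99% confidence: 0.01 * 10,000 = $100, so escalate.
claim = should_escalate(confidence=0.99, error_cost=10_000, review_cost=25)

print(photo, claim)
print(break_even_confidence(error_cost=10_000, review_cost=25))
```

In the high-stakes case the break-even point works out to 99.75% confidence, which is why expensive mistakes keep humans in the loop even when the model looks very sure of itself.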

Isn't It Expensive to Keep Humans Involved?

It's true that there's an investment involved—you need to account for people's time and the right software tools. But framing it as just a "cost" misses the bigger picture. It's better to think of HITL as a crucial investment in quality control.

Consider the alternative. The potential cost of unleashing a fully automated, flawed model on your customers can be astronomical. We’re talking about damage to your brand’s reputation, serious financial penalties, or even safety failures.

When you look at it that way, HITL isn't an expense; it's a smart strategy for building reliable, trustworthy AI and preventing catastrophic mistakes down the line.