Ever found yourself wondering how your phone just gets what you're saying, or how your email inbox magically knows what’s junk? That’s not magic; it’s Natural Language Processing (NLP). At its core, NLP is a fascinating field of artificial intelligence dedicated to teaching computers how to read, understand, and even generate human language. It’s the essential bridge that lets machines make sense of our messy, nuanced, and context-heavy world of communication.

Unlocking the Language of Machines

Natural Language Processing is a specialized corner of both artificial intelligence (AI) and machine learning (ML). If you think of AI as the broad effort to build intelligent systems, then NLP is the part specifically focused on mastering language. It's like the difference between building a whole brain and training the specific lobes responsible for reading, writing, and speaking.

This technology is quietly humming in the background of many tools you use every single day. When you ask Siri for the weather, use Google Translate on vacation, or see a grammar suggestion pop up in your document, you're tapping into an NLP model. Its main job is to take unstructured text—the way we naturally talk and write—and give it enough structure for a computer to process and act on it.

From Hand-Coded Rules to Learning on Its Own

NLP didn't just appear overnight. Its roots go all the way back to the 1950s, when the first systems were built on painstakingly hand-coded grammatical rules. Alan Turing's famous 1950 paper, which proposed a test for machine intelligence based on conversation, set the stage. A few years later, in 1966, a chatbot named ELIZA managed to simulate a psychotherapist by using simple keyword matching, creating conversations that felt surprisingly real for the time. These early, rule-based systems were the critical first steps that paved the way for the sophisticated, data-driven methods we have today. You can dive deeper into the complete history of NLP's evolution to see just how far we've come.

At its heart, NLP is about pattern recognition. It's about teaching a machine to see the statistical relationships between words, just like we intuitively learn that "Queen" is more likely to appear near "King" than "Kingston."

Instead of being fed rigid rules, modern NLP models learn these patterns by sifting through massive volumes of text. This fundamental shift from manual programming to automated learning is what has fueled the incredible progress we've witnessed in recent years.

The Core Goals of NLP

The ambitions of Natural Language Processing really boil down to a few key objectives. These goals are the driving force behind almost every application, from simple text correctors to complex search engines.

- Understanding Language (NLU): This is all about machine reading comprehension. Natural Language Understanding (NLU) focuses on figuring out the meaning behind the words—the intent, the sentiment, the entities involved. When you tell your smart assistant, "Book me a flight to Boston," NLU is what helps it grasp your ultimate goal.

- Generating Language (NLG): This is the creative side of the coin. Natural Language Generation (NLG) is the process of producing human-like text from structured information. It’s the tech that automatically writes up a sports summary from a box score, creates a weather forecast, or drafts a personalized marketing email.

- Enabling Interaction: Ultimately, the grand vision is to create a seamless, natural conversation between people and machines. This requires both understanding what we say and responding in a way that is helpful and coherent, closing the communication gap for good.

Getting a handle on these natural language processing basics is the first step. It opens the door to understanding how machines are finally learning to master our most distinctly human trait: language.

How Computers Learn To Read Human Language

Before a computer can make any sense of a sentence, it needs to get its hands dirty and prepare the text. Think of it like a chef prepping ingredients before cooking—you can’t just throw everything in a pot. This initial preparation is called text preprocessing, and it’s all about turning messy, raw human language into a clean, structured format that an algorithm can actually work with.

This isn’t just a simple cleanup. We're talking about stripping out all the noise—random punctuation, inconsistent capitalization, and common words that don’t add much meaning. Just like you wouldn't cook with unwashed vegetables, an NLP model can't learn from raw text.

Chopping Text Into Tokens

The very first "cut" a computer makes is a process called tokenization. It’s as straightforward as it sounds: you take a string of text and chop it up into individual units, or "tokens." Usually, these tokens are words, but they could also be sentences or even characters, depending on what you're trying to accomplish.

For example, the sentence "The quick brown fox jumps" gets tokenized into five separate pieces: ["The", "quick", "brown", "fox", "jumps"]. Suddenly, a solid block of text becomes a neat list of items that a machine can count, analyze, and process one by one.

Right after that, we often remove stopwords. These are the super common words like "the," "is," "a," and "in" that appear everywhere but don't carry much semantic weight. Filtering them out helps the model focus on the words that actually matter.

Getting To The Root Of A Word

Once we have our list of important tokens, we have to deal with word variations. Take the words "run," "running," and "ran." To a human, they're all related to the same action. But to a computer, they're three distinct tokens. This is where normalization techniques come in, reducing words to their core form.

There are two main ways to do this:

- Stemming: This is a quick-and-dirty method. It uses simple rules to chop off the ends of words to get to the "stem." So, "running" and "runner" might both become "run." The downside? It can sometimes create words that aren't real, like turning "studies" into "studi."

- Lemmatization: This is the smarter, more sophisticated approach. It uses a dictionary and grammar rules to find the true root word, or "lemma." Lemmatization correctly identifies that "ran" comes from "run," and that "better" is a form of "good."

To help you see how these different preprocessing steps fit together, we've put together a small comparison.

Comparing Common NLP Preprocessing Techniques

| Technique | Purpose | Example (Input: 'running') | Best Used For |

|---|---|---|---|

| Tokenization | Breaks text into individual units (words, sentences). | ['running'] (from "He is running.") |

The foundational first step for nearly all NLP tasks. |

| Stemming | Reduces words to their root form by chopping off endings. | run |

Speed-critical applications like search indexing where precision is less crucial. |

| Lemmatization | Reduces words to their dictionary form using grammatical context. | run |

Tasks requiring high accuracy, such as chatbots or sentiment analysis. |

As you can see, the right technique really depends on whether you need speed or accuracy for your specific project.

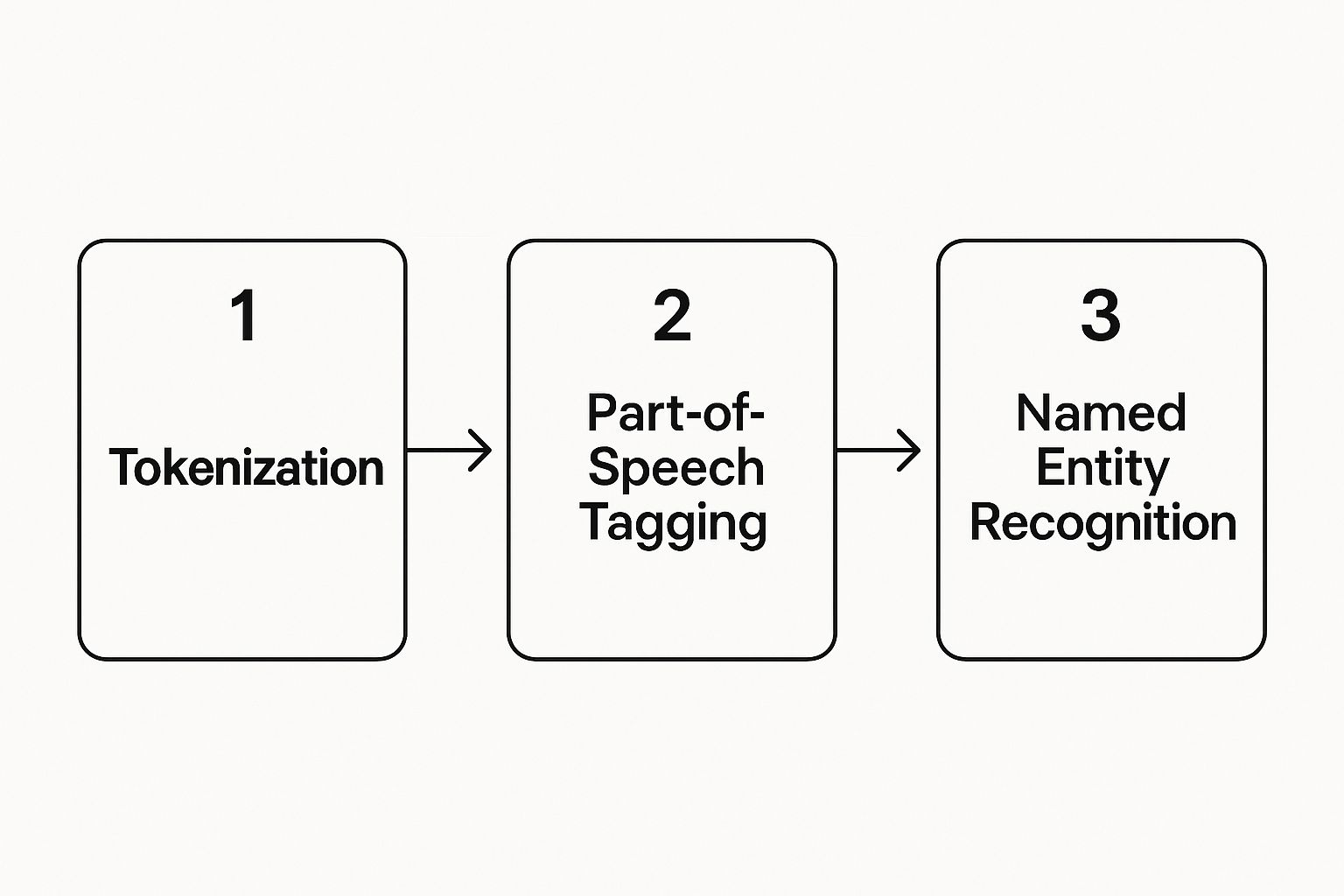

This infographic gives a great visual of how these initial steps are the building blocks for more advanced analysis.

The flow is clear: you start with tokenization and build your way up to complex tasks like Named Entity Recognition, with each step laying the groundwork for the next.

Adding Grammatical Context

After breaking the text down and simplifying the words, the next step is to figure out what role each word plays in the sentence. This is where Part-of-Speech (POS) Tagging comes into play. A POS tagger goes through a sentence and assigns a grammatical label—like noun, verb, adjective, or adverb—to every single token.

Key Takeaway: Part-of-Speech Tagging is like giving the computer a pair of grammar glasses. It helps the machine see the difference between "book" as a noun (something you read) and "book" as a verb (an action you take).

This grammatical context is absolutely vital for more sophisticated NLP tasks. The big shift away from hand-coded linguistic rules really took off in the 1980s, when statistical methods began to take over. Models like Hidden Markov Models (HMMs) started using probabilities to guess the correct POS tag based on the surrounding words, which paved the way for the powerful, data-driven models we use today.

These preprocessing and tagging steps aren't just academic exercises; they are the absolute foundation of any good machine learning model. The truth is, high-quality, clean, and well-structured data is non-negotiable. This is exactly why data annotation is critical for AI startups in 2025—the quality of your initial data directly impacts how well your final model performs. Every single step, from tokenizing a sentence to tagging its parts of speech, is crucial for building a machine that can truly understand human language.

Exploring Core NLP Techniques and Models

Once your text data is cleaned up and neatly structured, the real fun begins. This is where we shift from prepping ingredients to actually cooking the meal—turning raw words into something a machine can genuinely understand. The core techniques in NLP are all about converting text into numbers, which allows computers to spot patterns and calculate meaning in a way they couldn't otherwise.

The earliest approaches were deceptively simple but incredibly powerful, setting the stage for the sophisticated models we have today. These foundational methods revolved around word frequency, giving us a straightforward way to quantify what a piece of text was about.

From Words to Numbers

One of the first and most influential ideas in natural language processing basics is the Bag-of-Words (BoW) model. It's exactly what it sounds like. Imagine dumping all the words from a document into a bag, shaking it up, and then counting how many times each word appears. Grammar and word order are completely ignored.

All that matters is the frequency. A movie review might have the word "love" three times and "boring" once. BoW captures this as a simple numerical vector, summarizing the text based purely on word counts.

A smarter evolution of this is Term Frequency-Inverse Document Frequency (TF-IDF). This technique is more clever because it understands that not all words carry the same weight. It gives each word a score based on two key factors:

- Term Frequency (TF): This is just how often a word shows up in one specific document. If a word appears a lot, it's probably important within that document.

- Inverse Document Frequency (IDF): This measures how common or rare a word is across all your documents. Everyday words like "the" and "a" get a low score, while more unique, specific words get a high score.

By multiplying these two scores, TF-IDF effectively surfaces the words that are important to a single document but not just common fluff, giving you a much stronger signal about its actual topic.

Capturing the Meaning Between Words

While counting words is a great start, it misses something fundamental about language: context. A Bag-of-Words model sees "king" and "queen" as two totally unrelated things. This is the problem that word embeddings were created to solve.

Think of word embeddings as giving every word a specific coordinate on a giant "meaning map." On this map, words with similar meanings are clustered closely together.

Key Insight: Word embeddings give machines a sense of semantic relationships. On this map, the vector (the distance and direction) from "King" to "Queen" is almost identical to the vector from "Man" to "Woman."

This incredible feat is achieved by models like Word2Vec, which learn these relationships by analyzing which words tend to appear near each other across billions of sentences. This leap from counting words to understanding context paved the way for far more nuanced and accurate NLP applications.

If you want to see how this plays out in the real world, understanding the difference between semantic search versus keyword search is a perfect example of matching meaning instead of just words.

The Evolution to Advanced Architectures

Today's biggest NLP breakthroughs are driven by deep learning architectures built from the ground up to handle the sequential flow of language. These models have radically improved a machine's ability to grasp long-range context and subtle linguistic cues.

Two architectures in particular have defined the modern era of NLP:

- Recurrent Neural Networks (RNNs): These were an early game-changer for handling sequential data. An RNN reads a sentence one word at a time, carrying a "memory" of what it has already seen. This allows past context to influence its understanding of the current word, making it ideal for tasks like predicting the next word in a sentence.

- Transformer Models: Introduced in 2017, this architecture didn't just move the goalposts—it created a whole new field. Instead of processing words sequentially, Transformers use a mechanism called "attention" to look at all the words in a sentence at once, weighing how important each word is to every other word.

This ability to process text in parallel allows Transformers to capture incredibly complex relationships between words, no matter how far apart they are. This very breakthrough is the engine behind the large language models that power today's most advanced AI, from chatbots to sophisticated analysis tools. The journey from simple word counts to complex attention mechanisms shows a clear path toward a deeper, more human-like understanding of language.

Putting NLP to Work in the Real World

The theory behind natural language processing is fascinating, but its true magic comes alive when we see how it solves real, tangible problems. Once a computer can begin to understand human language, you can apply that skill in countless ways—many of which you probably use every day without a second thought.

This is where abstract concepts become practical, powerful tools. From keeping your inbox organized to breaking down language barriers, NLP is the invisible engine driving a much smarter and more connected world.

Keeping Your Inbox Clean and Safe

One of the oldest and most recognizable uses of NLP is the humble spam filter. At its core, this is a classic example of text classification, where the goal is to stick a label (like "spam" or "not spam") on a piece of text. The first filters were pretty basic, just looking for obvious trigger words like "free" or "winner."

Today's spam filters are in a different league entirely. They analyze thousands of signals, from the sender's reputation to the email's structure and the subtle linguistic patterns that separate a genuine newsletter from a phishing attack. It’s a constant cat-and-mouse game, with NLP models always learning to spot the latest tricks.

Understanding the Voice of the Customer

How can a global brand figure out what millions of customers really think about its new product? Reading every single tweet, review, and comment by hand is simply impossible. This is where sentiment analysis steps in, a technique that automatically figures out the emotional tone of a piece of writing.

Is that product review glowing, furious, or just plain neutral? By running sentiment analysis models across massive streams of customer feedback, companies can get a real-time pulse on public opinion. This helps them pinpoint product flaws, celebrate what’s working, and tackle customer service issues before they blow up. It turns a messy flood of unstructured chatter into sharp, actionable business intelligence.

Key Takeaway: NLP gives businesses the ability to listen at scale. Sentiment analysis moves beyond what customers are saying to understand how they feel, providing deep insights that drive better decisions.

Extracting Key Information Instantly

Imagine reading a dense legal document and having all the important names, organizations, locations, and dates automatically highlighted for you. That's the power of Named Entity Recognition (NER). NER models are trained to scan text and then identify and categorize these key pieces of information.

This technology is a cornerstone for how we find information today. Search engines use it to deliver richer, more relevant results, while news aggregators use it to group articles by the people and places involved. It’s a fundamental tool for any system that needs to quickly pull structured data out of unstructured text. For a deeper look into its many applications, you can explore our guide on https://ziloservices.com/blogs/the-power-of-natural-language-processing-nlp-applications-use-cases-and-impact-in-2025/.

More Powerful Real-World Examples

The list of NLP applications goes on and on, touching nearly every industry imaginable. The field is always moving, with new and creative uses popping up all the time.

Here are a few more game-changing applications:

- Machine Translation: Tools like Google Translate rely on incredibly complex NLP models to translate text and even spoken words across dozens of languages in near-real time. They’ve made global communication more accessible than ever before.

- Customer Service Chatbots: Many websites now have AI-powered chatbots that can answer common questions, guide you through a process, or solve basic issues. This frees up human agents to handle the more complex problems.

- Text Summarization: NLP can take long reports, articles, or research papers and distill them into concise summaries that hit all the main points. This is a lifesaver for any professional trying to stay informed on a tight schedule.

- Academic Research: Beyond everyday uses, NLP is also changing how academics work. For instance, researchers are now using AI tools for literature reviews to find, summarize, and organize vast amounts of information far more efficiently.

Each of these examples shows a different side of NLP's power. Whether it's classifying, analyzing, extracting, or even generating language, these tools are fundamentally changing our relationship with information itself.

The Rise of Large Language Models

The arrival of Large Language Models (LLMs) didn't just move the goalposts in natural language processing—it completely changed the game. Think of it this way: if older NLP models were like highly trained specialists, laser-focused on one specific job, LLMs are the brilliant polymaths. They are the engine behind tools like ChatGPT, demonstrating an uncanny ability to understand and generate human-like text on almost any topic you can throw at them.

So, what’s the secret sauce? It really boils down to two things: an almost unimaginable amount of data and a new kind of architecture built to handle it. LLMs learn by ingesting a massive portion of the internet—think books, articles, forums, and countless websites. This colossal diet gives them a deep, nuanced understanding of grammar, facts, reasoning, and the subtle art of conversation.

What Makes an LLM So Powerful?

Under the hood, an LLM is a type of neural network, but its sheer size is what truly sets it apart. The 2020s have been defined by their explosive growth. Take OpenAI's GPT-3, which made waves in 2020. It was built with an astonishing 175 billion parameters and fed a diet of hundreds of billions of words.

By 2024, the latest models have made even those numbers look small, pushing the boundaries of what's possible in text generation and dialogue. Of course, this kind of scale requires a staggering amount of computing power, with operational costs often running into millions of dollars every single month.

The real paradigm shift, however, is how LLMs learn. They introduced the world to zero-shot and few-shot learning, which means they can tackle jobs they weren't explicitly trained to do.

- Zero-Shot Learning: You can give an LLM a command like "Summarize this article into three bullet points" and get a decent result, even if it never saw a single summarization example during its training.

- Few-Shot Learning: You can guide the model by giving it just a couple of examples in your prompt. This helps it nail down a specific tone, format, or style you're looking for.

This flexibility is why LLMs are now everywhere, from powering sophisticated chatbots to acting as creative writing partners and even generating programming code. As these models become more common, learning to work with them is becoming an essential skill. In fact, understanding the different ways to adapt them, such as Customizing Language Models: Fine-Tuning vs. Prompt Engineering, is key to getting the most out of them.

Capabilities and Ethical Considerations

There’s no denying the power of LLMs. They can draft emails, write code, explain quantum physics in simple terms, and translate languages with impressive fluency. This has opened up a whole new world of possibilities for creativity and efficiency. But with that power comes a heavy dose of responsibility and some serious ethical hurdles we need to navigate.

With great power comes great responsibility. LLMs are not just tools; they are reflections of the vast, unfiltered data they were trained on. This means they can inherit and amplify human biases present in that data.

One of the biggest concerns is bias. Since LLMs learn from text written by humans, they inevitably absorb our societal biases around race, gender, and culture. If left unchecked, these models can easily perpetuate harmful stereotypes.

Another thorny issue is their tendency to generate misinformation. An LLM's prime directive is to produce text that sounds convincing. This means it can state falsehoods with complete and utter confidence—a phenomenon widely known as "hallucination."

Making sure this technology is used ethically is a massive, ongoing effort. It means building better safety guardrails, being upfront about the limitations of AI, and teaching people how to think critically about the information these models produce. As we weave LLMs deeper into the fabric of our lives, striking the right balance between their amazing capabilities and our ethical duties is more important than ever.

How to Start Your NLP Journey

Alright, you've got the theory down. Now for the fun part: turning all that knowledge into actual, working projects. Diving into natural language processing might seem intimidating, but thanks to a treasure trove of tools and resources, it's never been easier to get your hands dirty.

The first step is choosing your toolkit, and for NLP, Python is the undisputed language of choice. Its straightforward syntax and massive collection of libraries mean you can spend more time on the what and less on the how.

Essential Tools and Libraries

To get started, you really only need to get comfortable with a few key libraries. These do most of the heavy lifting for you.

- NLTK (Natural Language Toolkit): Think of this as the original workhorse of NLP in Python. It's fantastic for learning the ropes and experimenting with core concepts like tokenization, stemming, and tagging parts of speech.

- spaCy: When you're ready to build something for the real world, spaCy is your go-to. It's built for speed and efficiency, making it an industry favorite for tasks like Named Entity Recognition.

- Hugging Face Transformers: This is where things get really powerful. This library gives you access to thousands of pre-trained models, including the cutting-edge LLMs you hear so much about. It makes incredibly advanced NLP accessible to everyone.

A great way to start is by picking a simple, concrete project. Try downloading a public dataset, like IMDb movie reviews, and building a basic sentiment analysis classifier. It's a classic for a reason!

Of course, you can't do much without data. Websites like Kaggle are goldmines, offering tons of free, high-quality datasets that are perfect for practice. And when you inevitably get stuck—because we all do—online communities on Stack Overflow or Reddit are invaluable for getting help.

This combination of powerful libraries, free data, and a supportive community paves a clear path for anyone looking to build real NLP skills. If your interests lean more towards voice and speech, it's also worth looking into companies offering automated speech recognition services to see how these concepts are applied in specialized fields.

Got Questions About NLP? We've Got Answers

Diving into a new field like natural language processing always brings up a few questions. It’s a world filled with its own jargon and acronyms, so it's easy to get mixed up. Let's tackle some of the most common queries that come up.

What’s the Difference Between NLP, NLU, and NLG?

It’s easy to get these three confused, but they have distinct roles. Think of Natural Language Processing (NLP) as the entire discipline—the broad field dedicated to making computers understand and work with human language.

Under that umbrella, you have two key specializations:

- Natural Language Understanding (NLU): This is all about comprehension. NLU focuses on the input side of the equation, helping a machine grasp the meaning, intent, and context behind a piece of text. It's the "reading" part.

- Natural Language Generation (NLG): This is the creative side. NLG handles the output, taking structured information and turning it into natural, human-like text. It's the "writing" part.

A simple way to remember it: NLU reads and understands the customer's email, and NLG drafts a perfect reply.

What Does "Corpus" Mean in NLP?

In the world of NLP, a corpus (the plural is corpora) is just a fancy term for a large, organized collection of text. This is the raw material, the dataset, that you use to train and test your language models.

A corpus can be anything from every play Shakespeare ever wrote to a decade's worth of news articles or millions of product reviews scraped from a website.

The quality of your NLP model is directly linked to the quality of its training corpus. A diverse, well-annotated corpus is the bedrock of any accurate and fair NLP system.

How Can I Start Learning NLP in a Hands-On Way?

The best way to learn is by building something. Start by getting familiar with Python, which is the go-to language for almost all NLP work. From there, your first stop should be a library like NLTK to get your hands dirty with fundamental tasks like tokenization and stemming. It's great for learning the ropes.

Once you’ve got a handle on the basics, you'll want to graduate to more powerful, production-ready tools. Check out spaCy for building practical applications and the Hugging Face Transformers library to tap into the world of powerful, pre-trained models. A great first project is to build a simple sentiment analyzer for movie reviews—it’s a classic for a reason!

At Zilo AI, we specialize in creating the high-quality, expertly annotated data that fuels these sophisticated NLP models. Whether you're labeling text for sentiment analysis or sourcing multilingual data for a global product, our solutions give your AI projects the solid foundation they need to succeed. Learn how Zilo AI can support your data needs.